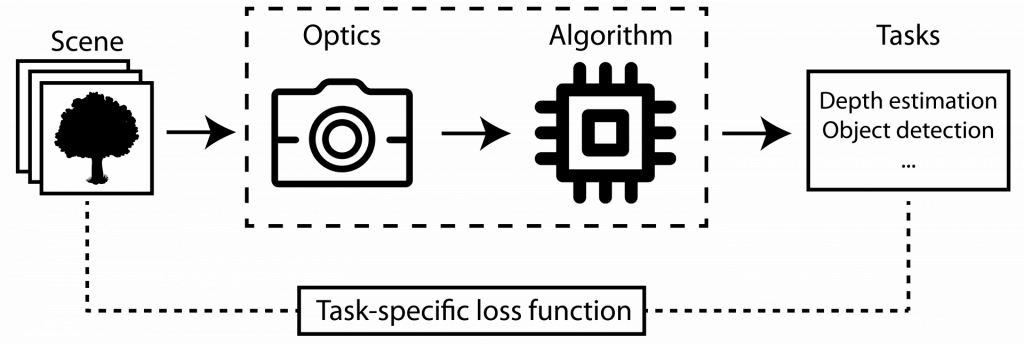

Computational imaging systems combine optical design with algorithm design. Instead of optimizing these two components separately and sequentially, we treat the entire system as one neural network and develop an end-to-end optimization framework. Specifically, the first layer of the network corresponds to the physical optical elements, and all subsequent layers represent the computational algorithm. All parameters, optical and algorithmic, are learned jointly by minimizing a task-specific loss over a large dataset. Such a learning-based framework can potentially go beyond the limits imposed by model-based methods and create better sensor systems.
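As a rough illustration of the idea, and not the model used in the papers listed here, the sketch below treats a learnable measurement matrix `A` as a stand-in for the optical layer and a linear decoder `W` as the computational layer, then updates both with the same gradient step on a reconstruction loss. The function name, the matrix sizes, the synthetic random scenes, and the choice of mean squared error as the task loss are all assumptions made for the example.

```python
import numpy as np


def train_end_to_end(n=16, m=4, num_scenes=256, steps=300, lr=0.5, seed=0):
    """Jointly fit a toy 'optical' layer A and a linear decoder W by gradient descent."""
    rng = np.random.default_rng(seed)
    X = rng.standard_normal((num_scenes, n))   # synthetic scenes, one per row (assumed data)
    A = 0.1 * rng.standard_normal((m, n))      # hypothetical optical layer: m measurements of n pixels
    W = 0.1 * rng.standard_normal((n, m))      # computational layer: linear reconstruction
    losses = []
    for _ in range(steps):
        Y = X @ A.T                            # simulated sensor measurements
        X_hat = Y @ W.T                        # reconstructed scenes
        R = X_hat - X                          # reconstruction residual
        losses.append(float(np.mean(R ** 2)))  # task loss: mean squared error
        G = 2.0 * R / R.size                   # gradient of the loss w.r.t. X_hat
        grad_W = G.T @ Y                       # gradient for the decoder
        grad_A = (G @ W).T @ X                 # gradient passed back through the decoder to the optics
        W -= lr * grad_W                       # both layers take the same kind of update,
        A -= lr * grad_A                       # so optics and algorithm are optimized together
    return losses, A, W


if __name__ == "__main__":
    losses, A, W = train_end_to_end()
    print("initial loss:", losses[0])
    print("final loss:", losses[-1])
```

In a real system the optical layer would be constrained by physics (for example, a point spread function produced by a manufacturable element), and the decoder would typically be a deeper network trained for the target task.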

Yicheng Wu, Vivek Boominathan, Huaijin Chen, Aswin Sankaranarayanan, Ashok Veeraraghavan

IEEE International Conference on Computational Photography (ICCP) 2019

Huaijin Chen*, Suren Jayasuriya*, Jiyue Yang, Judy Stephen, Sriram Sivaramakrishnan, Ashok Veeraraghavan, Alyosha Molnar (* = joint first authors)

IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2016